Using Python, NHS open data (NHS Choices), and Mongo: for example, getting the names and the web sites of all the providers in the Wigan area (why Wigan? No idea, except that their football team recently defeated Arsenal, and the name stuck with me).

Start the database: go to the bin subdirectory of the install directory, and type mongod --dbpath .\

I will connect to the database using the Python API (pymongo).

NHS Choices offers several health data feeds:

- News

- Find Services

- Live Well

- Health A-Z (Conditions)

- Common Health Questions

I will use the second, Find Services; to access it, you need a login and a password (apply for one here).

The basic Python code to query for providers and extract their names and web addresses is this:

First, build a list of services, as per the NHS documentation (the service code and the location are two required parameters):

    services = [[1, 'GPs'], [2, 'Dentists']]  # and so on for the other service codes
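Since the service code and the location are the two required parameters, the query URL can also be assembled with the standard library rather than by string concatenation. The following is a minimal Python 3 sketch, assuming the same endpoint and parameter names used in this post; build_endpoint and the placeholder credentials are illustrative names, not part of the NHS API:

```python
from urllib.parse import urlencode

# Endpoint as used in this post; the credentials are placeholders.
BASE_URL = "http://www.nhs.uk/NHSCWS/Services/ServicesSearch.aspx"


def build_endpoint(service_code, location, user="__login__", pwd="__password__"):
    """Return the search URL for one service code and one location."""
    params = {"user": user, "pwd": pwd, "q": location, "type": service_code}
    # urlencode escapes any characters that are not safe in a query string
    return BASE_URL + "?" + urlencode(params)


services = [[1, "GPs"], [2, "Dentists"]]
# One URL per service: the code (first item) is sent, the label is ignored
urls = [build_endpoint(code, "Wigan") for code, _label in services]
```

Going through urlencode means a location containing a space or an ampersand would still produce a valid query string.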

Then, query the web service:

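    import urllib
    from xml.dom import minidom

    for code, label in services:
        # Send the NHS service code (not the list index) as the type parameter
        endpoint = 'http://www.nhs.uk/NHSCWS/Services/ServicesSearch.aspx?user=__login__&pwd=__password__&q=Wigan&type=' + str(code)
        usock = urllib.urlopen(endpoint)
        xmldoc = minidom.parse(usock)
        usock.close()
        nodes = xmldoc.getElementsByTagName("Service")
        for node in nodes:
            website = node.getElementsByTagName("Website")
            name = node.getElementsByTagName("Name")
            if website[0].firstChild is not None:
                # For example, print the provider name and its web address
                print name[0].firstChild.nodeValue, website[0].firstChild.nodeValue
        xmldoc.unlink()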

Each result yields three items: the service type, the provider name, and the web site (if one exists).
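The extraction step can be tried without credentials by parsing a small XML string. This is a Python 3 sketch only: the Service, Name and Website tag names come from the code above, but the sample document and the helper names (SAMPLE_XML, element_text, extract_providers) are made up for illustration and do not reproduce the real NHS response.

```python
from xml.dom import minidom

# Hypothetical sample; the real feed contains more fields than this.
SAMPLE_XML = """<Services>
  <Service><Name>Example Surgery</Name><Website>http://example.org</Website></Service>
  <Service><Name>Example Clinic</Name><Website></Website></Service>
</Services>"""


def element_text(node, tag):
    """Return the text of the first <tag> child, or None if absent or empty."""
    elements = node.getElementsByTagName(tag)
    if elements and elements[0].firstChild is not None:
        return elements[0].firstChild.nodeValue.strip()
    return None


def extract_providers(xml_text, service_code):
    """Return a list of (service_code, name, website) tuples."""
    doc = minidom.parseString(xml_text)
    rows = []
    for node in doc.getElementsByTagName("Service"):
        rows.append((service_code, element_text(node, "Name"), element_text(node, "Website")))
    doc.unlink()  # release the DOM tree, as in the code above
    return rows


rows = extract_providers(SAMPLE_XML, 1)
```

Here a provider without a web site comes back with None in the third position, which the caller can replace with any placeholder it likes.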

Mongo is a bit different in that the 'server' does not physically create a database until something is written to it. From the console client (launch mongo from \bin\), you can therefore connect to a database that does not exist yet: in this case, use NHS followed by a first write will create the NHS database (in effect, files named NHS in the current directory).

Create the 'table' from the console client:

    NHS = { service : "service", name : "name", website : "website" };

Then db.data.save(NHS); creates a collection called data (the closest SQL analogue is a table) and saves the NHS object into it as a document. The mongo client uses JavaScript as its language and JSON notation to define documents.

To access this collection in Python:

    >>> from pymongo import Connection
    >>> connection = Connection()
    >>> db=connection.NHS
    >>> storage=db.data
    >>> post={"service" : 1, "name" : "python", "website" : "mongo" }
    >>> storage.insert(post)
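The Connection class and the insert method shown here belong to the pymongo releases of the time; later pymongo releases replaced them with MongoClient and insert_one. The following Python 3 sketch shows the same insert against the newer API. The helper names make_post and save_provider are illustrative, and the function takes the collection as an argument so that it can be exercised without a running server:

```python
def make_post(service_code, name, website=None):
    """Build the document stored for one provider."""
    return {"service": service_code, "name": name, "website": website or "none"}


def save_provider(collection, service_code, name, website=None):
    """Insert one provider document and return the document that was stored."""
    post = make_post(service_code, name, website)
    collection.insert_one(post)  # newer replacement for storage.insert(post)
    return post


# Usage with a running mongod (assumes a recent pymongo is installed):
#   from pymongo import MongoClient
#   storage = MongoClient().NHS.data
#   save_provider(storage, 1, "python", "mongo")
```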

Here is the full code in Python to populate the database:

    import urllib
    from xml.dom import minidom
    from pymongo import Connection

    print "Building list of services..."
    # Each entry is [NHS service code, label]; the codes are not contiguous
    services = [[1, 'GPs'], [2, 'Dentists'], [3, 'Pharmacists'], [4, 'Opticians'], [5, 'Hospitals'], [7, 'Walk-in centres'], [9, 'Stop-smoking services'], [10, 'NHS trusts'], [11, 'Sexual health services'], [12, 'DISABLED (Maternity units)'], [13, 'Sport and fitness services'], [15, 'Parenting & Childcare services'], [17, 'Alcohol services'], [19, 'Services for carers'], [20, 'Renal Services'], [21, 'Minor injuries units'], [22, 'Mental health services'], [23, 'Breast cancer screening'], [24, 'Support for independent living'], [26, 'Memory problems'], [27, 'Termination of pregnancy (abortion) clinics'], [28, 'Foot services'], [29, 'Diabetes clinics'], [30, 'Asthma clinics'], [31, 'Midwifery teams'], [32, 'Community clinics']]

    print "Connecting to the database..."
    connection = Connection()
    db = connection.NHS
    storage = db.data

    print "Scanning the web service..."
    for code, label in services:
        print '*** ' + label + ' ***'
        # Send the NHS service code (not the list index) as the type parameter
        endpoint = 'http://www.nhs.uk/NHSCWS/Services/ServicesSearch.aspx?user=__login__&pwd=__password__&q=Wigan&type=' + str(code)
        usock = urllib.urlopen(endpoint)
        xmldoc = minidom.parse(usock)
        usock.close()
        nodes = xmldoc.getElementsByTagName("Service")
        for node in nodes:
            website = node.getElementsByTagName("Website")
            name = node.getElementsByTagName("Name")
            namei = name[0].firstChild.nodeValue
            # Not every provider has a web site
            if website[0].firstChild is not None:
                websitei = ' ' + website[0].firstChild.nodeValue
            else:
                websitei = 'none'
            post = { "service" : code, "name" : namei, "website" : websitei }
            storage.insert(post)
        xmldoc.unlink()

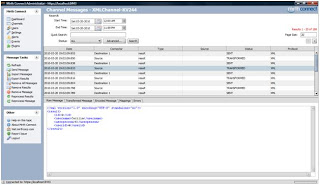

To see the results from the Mongo client:

    > db.data.find({service:5}).forEach(function(x){print(tojson(x));});

This returns all the hospitals inserted in the database (the service code for hospitals is 5); the response looks like this:

    {
        "_id" : ObjectId("4bc062dbc7ccc10428000032"),
        "website" : " http://www.wiganleigh.nhs.uk/Internet/Home/Hospitals/tlc.asp",
        "name" : "Thomas Linacre Outpatient Centre",
        "service" : 5
    }
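The same query can be run from Python, since a pymongo collection also has a find method that takes a filter document. A minimal Python 3 sketch, where find_providers is an illustrative helper name and the collection is passed in as an argument:

```python
def find_providers(collection, service_code):
    """Return (name, website) pairs for every stored provider of one service."""
    cursor = collection.find({"service": service_code})
    # The script stores web sites with a leading space, so strip it here
    return [(doc["name"], doc["website"].strip()) for doc in cursor]


# Usage with a running mongod (assumes pymongo is installed):
#   from pymongo import MongoClient
#   hospitals = find_providers(MongoClient().NHS.data, 5)
```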

Next, it might be interesting to try this using Mongo's REST API, and perhaps to build a GoogleApp to do so.